USAID, September 2018. 98 pages. Available as PDF

Rick Davies comment: A very good overview, balanced, informative, with examples. Worth reading from beginning to end.

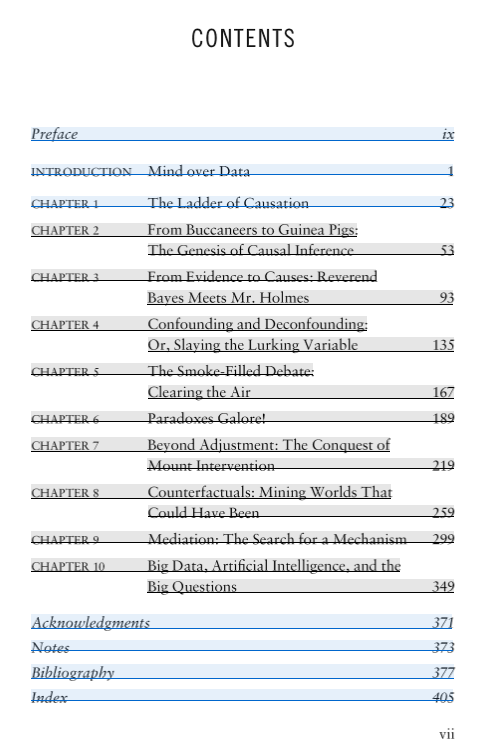

Contents

Introduction

Roadmap: How to use this document

Machine learning: Where we are and where we might be going

• ML and AI: What are they?

• How ML works: The basics

• Applications in development

• Case study: Data-driven agronomy and machine learning at the International Center for Tropical Agriculture

• Case study: Harambee Youth Employment Accelerator

Machine learning: What can go wrong?

• Invisible minorities

• Predicting the wrong thing

• Bundling assistance and surveillance

• Malicious use

• Uneven failures and why they matter

How people influence the design and use of ML tools

• Reviewing data: How it can make all the difference

• Model-building: Why the details matter

• Integrating into practice: It’s not just “Plug and Play”

Action suggestions: What development practitioners can do today

• Advocate for your problem

• Bring context to the fore

• Invest in relationships

• Critically assess ML tools

Looking forward: How to cultivate fair & inclusive ML for the future

Quick reference: Guiding questions

Appendix: Peering under the hood [gives more details on specific machine learning algorithms]

See also the associated USAID blog posting, and possibly also "How can machine learning and artificial intelligence be used in development interventions and impact evaluations?"